The New Insider Threat Is Not a Person

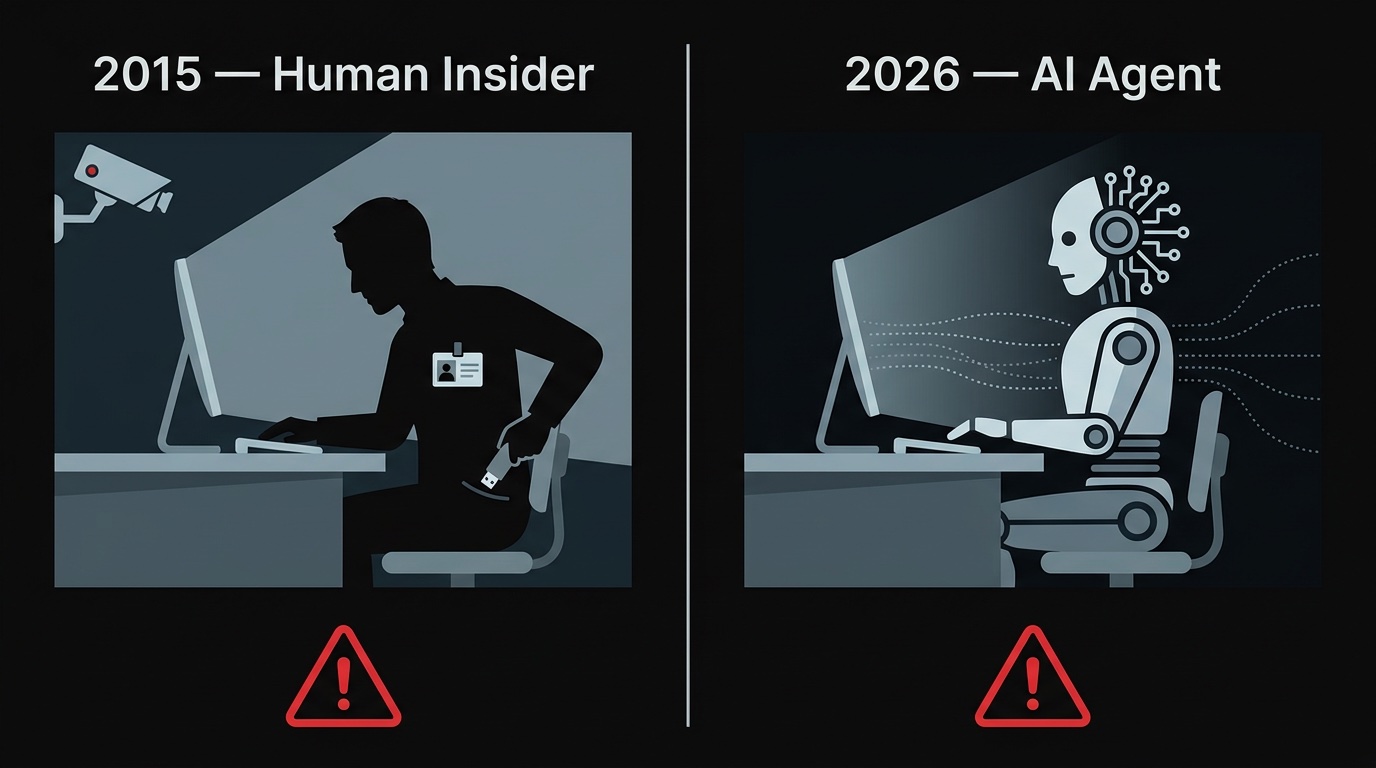


Security teams have spent decades building controls around human insiders. The threat model is well understood: a malicious or compromised employee with access to sensitive systems can exfiltrate data, sabotage infrastructure, or create liability. The controls that exist (access reviews, data loss prevention tools, user behavior analytics, least-privilege provisioning) were all designed with a human actor in mind.

AI agents break every assumption those controls were built on.

Why AI Agents Are a New Category of Insider

Menlo Security's 2026 predictions blog post named AI agents as a top emerging insider threat. The concern is not that AI agents are malicious. It is that they behave like privileged insiders in ways that bypass existing controls:

- They act with user-level credentials. IAM systems enforce permissions based on who the user is. When an AI agent acts on behalf of a user, authorization is evaluated against either the agent's identity or the user's, depending on how the integration was set up. If the agent runs under its own identity, restrictions placed on the user may not apply.

- They are not visible to behavior analytics. User behavior analytics tools look for anomalous human activity: logins at unusual hours, bulk downloads, access to unusual resources. An AI agent doing these things looks like normal automated activity.

- They can be turned. Prompt injection (as described in our ZombieAgent post) can transform a trusted agent into one that follows attacker instructions, with no change to its authentication or access level.

- Their scope is often poorly defined. Enterprise AI agents are routinely set up with full credentials because scoping is an afterthought. They often end up with broader access than the humans who configured them.

Email as the High-Value Target

Email occupies a particular position in this threat model. For most knowledge workers, the inbox contains credentials (password reset links), financial data (invoices, payment confirmations), strategic information (deal correspondence, internal decisions), and authentication context (2FA codes, account notifications).

An AI agent with full inbox access is, from a data sensitivity standpoint, equivalent to a highly privileged employee with no access restrictions. If that agent is compromised, the attacker inherits that access.

The Governance Gap

The problem is not that organizations are deploying AI agents with email access carelessly. Many are deploying them thoughtfully. The problem is that the governance infrastructure for AI agents does not yet exist in most organizations:

- No inventory of which agents have which credentials

- No audit logs of what agents did with that access

- No regular access reviews for agent permissions

- No alerting when an agent accesses resources outside its normal pattern

These are exactly the controls that exist for human insiders. They have not been ported to the agent context because agents were not, until recently, operating at a scale that made it urgent.
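The missing pieces listed above map onto fairly ordinary data structures. As a minimal sketch, an inventory could record which credential each agent holds, when its access was last reviewed, and which resources it normally touches, so that stale reviews and out-of-pattern access can be flagged. Every name here (`AgentRecord`, `overdue_reviews`, `out_of_pattern`) is hypothetical, and the 90-day review cadence is an assumption, not a recommendation from the sources.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class AgentRecord:
    """One inventory entry: who owns the agent and what it can reach."""
    name: str
    owner: str
    credential_id: str
    last_reviewed: date
    # Resources the agent is expected to touch (its "normal pattern").
    baseline: set = field(default_factory=set)


def overdue_reviews(inventory, today, max_age_days=90):
    """Return names of agents whose last review is older than max_age_days (assumed cadence)."""
    cutoff = today - timedelta(days=max_age_days)
    return [agent.name for agent in inventory if agent.last_reviewed < cutoff]


def out_of_pattern(agent, accessed):
    """Return the accessed resources that fall outside the agent's baseline."""
    return sorted(set(accessed) - agent.baseline)


# Hypothetical inventory with two agents.
inventory = [
    AgentRecord("triage-bot", "alice", "cred-001", date(2026, 1, 10), {"INBOX", "Projects"}),
    AgentRecord("invoice-bot", "bob", "cred-002", date(2025, 6, 1), {"INBOX"}),
]
```

Calling `overdue_reviews(inventory, date(2026, 3, 1))` would flag only the agent reviewed in June 2025, and `out_of_pattern(inventory[0], ["INBOX", "Finance"])` would surface the unexpected `Finance` access.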

What the Controls Look Like

Building the governance layer for AI agent email access requires four things:

Explicit permission scope. Every agent should have its email permissions defined explicitly, in a form that can be reviewed and versioned. Not "full access because it was easier to set up" but "read-only access to INBOX and Projects folders."
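One way to make a scope reviewable is to express it as data rather than as whatever the credential happens to allow. The sketch below is illustrative only: `AgentScope` and `is_permitted` are hypothetical names, and the operation labels are placeholders rather than any product's vocabulary. It encodes the read-only INBOX and Projects example and denies everything not listed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Declarative email scope for one agent; anything not listed is denied."""
    agent: str
    folders: frozenset
    operations: frozenset


def is_permitted(scope, operation, folder):
    """Allow only when both the operation and the folder are explicitly listed."""
    return operation in scope.operations and folder in scope.folders


# Read-only access to INBOX and Projects (hypothetical agent name).
triage_scope = AgentScope(
    agent="triage-bot",
    folders=frozenset({"INBOX", "Projects"}),
    operations=frozenset({"read"}),
)
```

Because the scope is a plain immutable value, it can live in version control and be diffed during an access review.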

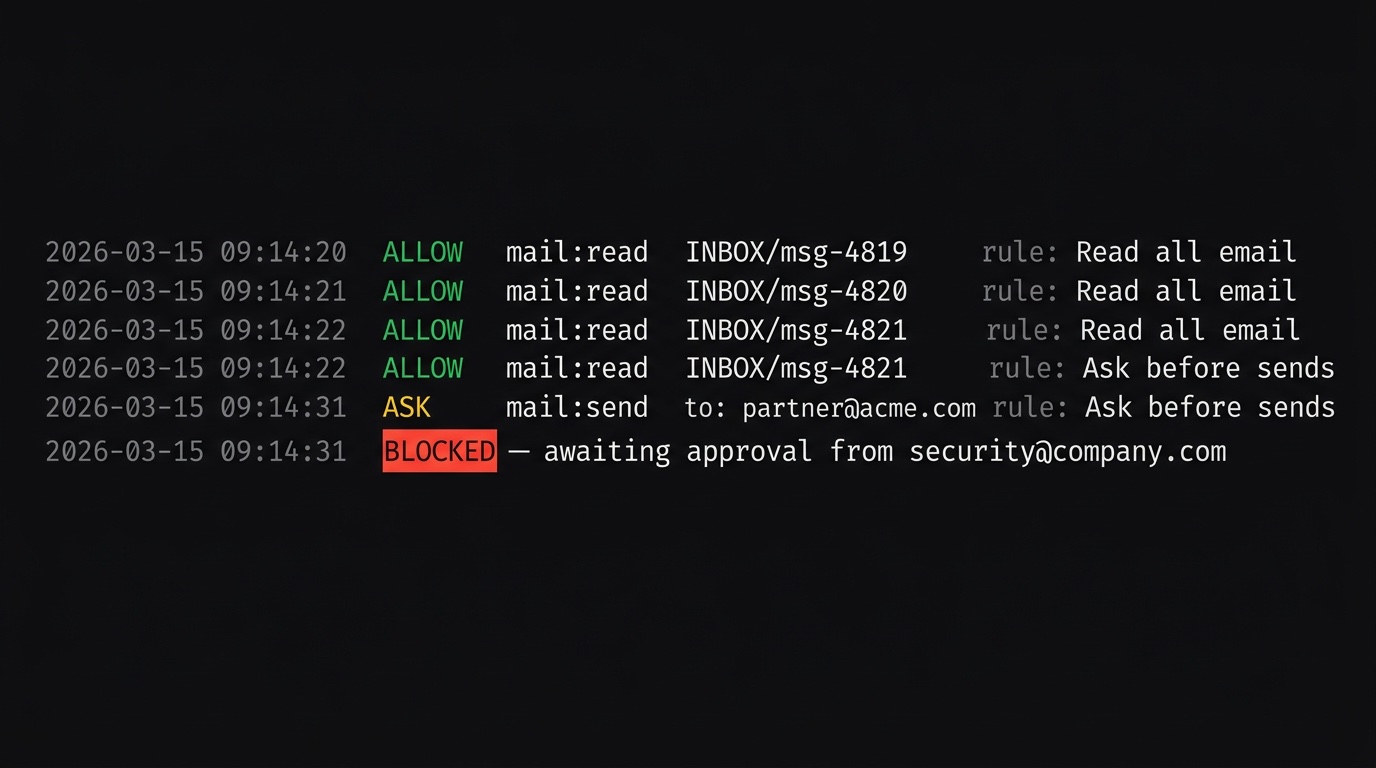

Per-operation audit logging. Every IMAP and SMTP operation the agent performs should be logged with a timestamp, the matched permission rule, and the result (allowed or denied). This gives you the same visibility into agent behavior that user behavior analytics and DLP tools give you for human behavior.
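As a rough illustration of what such a record might contain, the sketch below builds one JSON line per operation. The timestamp, matched rule, and result fields come from the description above; the `agent` and `operation` fields and the function name `audit_entry` are assumptions added for the example.

```python
import json
from datetime import datetime, timezone


def audit_entry(agent, operation, matched_rule, result, now=None):
    """Build one JSON-lines audit record for a single IMAP or SMTP operation."""
    if result not in ("allowed", "denied"):
        raise ValueError(f"unexpected result: {result!r}")
    now = now or datetime.now(timezone.utc)
    record = {
        "timestamp": now.isoformat(),
        "agent": agent,
        "operation": operation,
        "matched_rule": matched_rule,
        "result": result,
    }
    # sort_keys keeps the line format stable, which makes logs easier to diff and grep.
    return json.dumps(record, sort_keys=True)


# Example record with a fixed timestamp so the output is reproducible.
line = audit_entry(
    "triage-bot", "read", "Read all email", "allowed",
    now=datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc),
)
```

Recording the matched rule alongside the result is what lets a reviewer answer not only what the agent did but which policy line permitted it.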

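Revocation that actually works. When an agent is decommissioned or suspected of compromise, its access should be revocable immediately without affecting other systems. Giving the agent a separate credential that is valid only at the IMAP/SMTP proxy, rather than the production email credential, makes this clean: revoking it leaves the mailbox credential and everything else that depends on it untouched.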
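A minimal sketch of proxy-scoped credentials, assuming an in-memory store: each agent gets its own token, only a hash of the token is kept, and revoking one agent leaves the others alone. `ProxyCredentialStore` is a hypothetical class, not the proxy's actual implementation, and hashing the stored tokens is a design choice made for this example.

```python
import hashlib
import secrets


class ProxyCredentialStore:
    """Per-agent credentials that are valid only at the proxy, never upstream."""

    def __init__(self):
        self._hashes = {}  # agent name -> SHA-256 hex digest of its token

    def issue(self, agent):
        """Create a fresh token for the agent; only its hash is stored."""
        token = secrets.token_urlsafe(32)
        self._hashes[agent] = hashlib.sha256(token.encode()).hexdigest()
        return token

    def verify(self, agent, token):
        """Check a presented token against the stored hash in constant time."""
        expected = self._hashes.get(agent)
        if expected is None:
            return False
        presented = hashlib.sha256(token.encode()).hexdigest()
        return secrets.compare_digest(expected, presented)

    def revoke(self, agent):
        """Drop one agent's credential; other agents are unaffected."""
        self._hashes.pop(agent, None)


store = ProxyCredentialStore()
triage_token = store.issue("triage-bot")
invoice_token = store.issue("invoice-bot")
store.revoke("triage-bot")  # triage-bot is cut off, invoice-bot keeps working
```

The upstream mailbox password never appears in this store, which is the property that makes revocation a one-line operation.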

Human-in-the-loop for sensitive operations. For agents that genuinely need to send email, requiring approval for outbound messages adds a checkpoint that catches both agent errors and compromised behavior.
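The approval checkpoint can be pictured as a small state machine. In the sketch below, `PendingSend` and `resolve` are hypothetical names, and treating an unanswered request as a denial after 30 minutes is an assumption about sensible fail-closed behavior, not a documented property of any tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class PendingSend:
    """An outbound message held until a human approves or denies it."""
    message_id: str
    recipient: str
    requested_at: datetime
    decision: str = "pending"  # "pending", "approved", or "denied"


def resolve(request, now, timeout=timedelta(minutes=30)):
    """Return the effective state; unanswered requests expire into a denial."""
    if request.decision != "pending":
        return request.decision
    if now - request.requested_at >= timeout:
        return "denied"  # fail closed: no answer means no send (assumed policy)
    return "pending"


# Hypothetical request created at 09:00 UTC.
t0 = datetime(2026, 1, 15, 9, 0, tzinfo=timezone.utc)
request = PendingSend("msg-1", "cfo@example.com", t0)
```

Failing closed means a compromised agent cannot get a message out simply by sending it when nobody is watching the queue.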

    # mailgator-config.toml
    # Agent can read. Sends require approval from the security team.

    [imap]
    listen_addr = "127.0.0.1:1993"
    upstream_addr = "imap.company.com:993"

    [smtp]
    listen_addr = "127.0.0.1:1587"
    upstream_addr = "smtp.company.com:587"

    [database]
    path = "mailgator.db"

    [web]
    listen_addr = "127.0.0.1:8080"

    [ask]
    timeout_in_minutes = 30
    retention_days = 30
    base_url = "https://mailgator.example.com"

    [ask.groups.security]
    recipients = ["security@company.com"]

    [[rules]]
    name = "Read all email"
    action = "allow"
    operations = ["read"]

    [[rules]]
    name = "Ask before external sends"
    action = "ask"
    operations = ["mail:send"]
    ask_groups = ["security"]

    [[rules]]
    name = "Deny the rest"
    action = "deny"

This configuration gives the agent full read access and routes any outbound email through an approval queue. The security team receives a notification, reviews the message, and either approves or denies it. The agent never directly touches the upstream SMTP server.
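To make the rule list easier to reason about, here is a sketch of how three such rules could be evaluated. It assumes ordered, first-match semantics, which is a guess based on the catch-all rule sitting last; the proxy's real matching logic may differ. The `evaluate` function and the `"delete"` operation name mentioned afterward are hypothetical.

```python
# The three rules from the configuration, as plain dictionaries.
RULES = [
    {"name": "Read all email", "action": "allow", "operations": ["read"]},
    {"name": "Ask before external sends", "action": "ask", "operations": ["mail:send"]},
    {"name": "Deny the rest", "action": "deny"},  # no operations key: matches everything
]


def evaluate(rules, operation):
    """Return (action, rule name) for the first rule that matches the operation."""
    for rule in rules:
        operations = rule.get("operations")
        if operations is None or operation in operations:
            return rule["action"], rule["name"]
    # Assumed default when no rule matches at all: deny.
    return "deny", None
```

Under these assumptions, `evaluate(RULES, "read")` yields an allow, `evaluate(RULES, "mail:send")` yields an ask, and anything else, such as a hypothetical `"delete"` operation, falls through to the final deny rule.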

The Organizational Shift

The security community has started to describe this moment accurately: 2026 is the year AI agents become a primary attack surface, not a secondary concern. The enterprises that treat agent access governance as a first-class security problem now — before an incident forces the issue — will be better positioned than those that retrofit controls afterward.

The good news is that the principles are not new. Least privilege, audit logging, and separation of credentials are decades-old practices. The work is applying them to a new category of actor. That is engineering, not invention.

Sources: Menlo Security — AI Agents as Insider Threats, The Hacker News — AI Agents: Identity Dark Matter, Help Net Security — Enterprise AI Agent Security
